Oh my Ansible!

I've been meaning to write about Ansible for a while because it's one of those tools that has genuinely changed how I work day to day. If you've read my previous posts about Proxmox and Docker, you'll have a sense of the kind of infrastructure I'm running. Ansible is the thread that ties a lot of it together, and once I started using it properly, I couldn't imagine going back to doing things manually.

So what is Ansible?

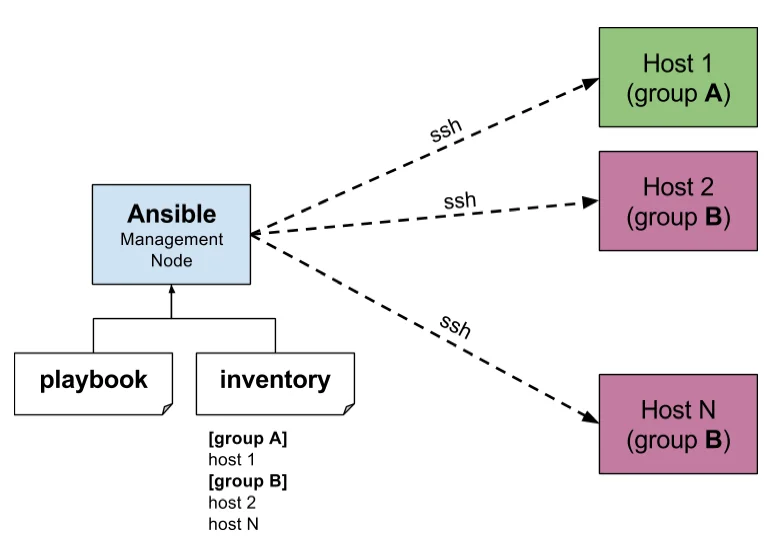

Ansible is an open source automation and configuration management tool. At its most basic level, it lets you define what you want your infrastructure to look like and then makes it so. You write playbooks, which are essentially instructions written in YAML, and Ansible executes them against whatever hosts you point it at. No agent is required on the target machines: Ansible connects over SSH (most modules also need Python on the target), which means there's almost zero barrier to running it against existing infrastructure.

The concept it's built around is idempotency, which is a fancy way of saying that running the same playbook twice should produce the same result as running it once. Ansible isn't just executing commands; it's enforcing a desired state. If the state is already correct, it does nothing. If it isn't, it fixes it. That holds as long as your tasks use Ansible's state-aware modules; raw `shell` and `command` tasks run every time unless you tell them otherwise. That distinction matters a lot in practice.
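To make that concrete, here's a minimal Python sketch of what "enforcing a desired state" means for one tiny resource: a line that should exist in a config file. It's loosely the idea behind Ansible's `lineinfile` module, but the `ensure_line` helper is hypothetical and far simpler than the real module. The important detail is that it reports whether it changed anything, so the second run is a no-op.

```python
import tempfile
from pathlib import Path


def ensure_line(path: Path, line: str) -> bool:
    """Ensure `line` is present in the file at `path`; return True only if the file changed."""
    existing = path.read_text().splitlines() if path.exists() else []
    if line in existing:
        return False  # already in the desired state: do nothing
    existing.append(line)
    path.write_text("\n".join(existing) + "\n")
    return True  # state was wrong, so fix it and report a change


with tempfile.TemporaryDirectory() as tmp:
    config = Path(tmp) / "example.conf"
    config.write_text("Port 22\n")
    first = ensure_line(config, "PermitRootLogin no")   # changes the file
    second = ensure_line(config, "PermitRootLogin no")  # nothing left to do
    final_text = config.read_text()

print(first, second)  # True False
```

That `changed` flag is the same signal Ansible shows you as "changed" versus "ok" for each task.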

It's agentless, it's readable, it's got a massive module ecosystem, and it integrates with pretty much everything. That last point is what makes it so powerful in a real infrastructure context.

What I actually use it for

This is the part I want to spend some time on because I think the best way to understand what Ansible can do is to see it in a real workflow rather than just talking about it abstractly.

My Proxmox environment is almost entirely managed through Ansible at this point. When I need a new VM I don't log into the Proxmox web interface and click through the creation wizard. I run a playbook. Ansible hits the Proxmox API, provisions the VM with the right specs, and that's the starting point. But the provisioning itself is only the beginning of what happens.
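For a sense of what "hits the Proxmox API" means underneath, here's a rough Python sketch that builds (but doesn't send) a request to create a VM through the Proxmox VE REST API. The host, node, token, VMID, VM name, and the `vmbr0` bridge are placeholder values for illustration only, and in an actual playbook you'd more likely reach for a module such as `community.general.proxmox_kvm` than hand-roll HTTP calls.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_create_vm_request(host: str, node: str, token_id: str, secret: str,
                            vmid: int, name: str, cores: int, memory_mib: int) -> Request:
    """Build (but do not send) a Proxmox VE API request that creates a QEMU VM."""
    url = f"https://{host}:8006/api2/json/nodes/{node}/qemu"
    params = {
        "vmid": vmid,
        "name": name,
        "cores": cores,
        "memory": memory_mib,           # Proxmox expects memory in MiB
        "net0": "virtio,bridge=vmbr0",  # placeholder bridge name
    }
    headers = {
        # API token auth format: PVEAPIToken=USER@REALM!TOKENID=SECRET
        "Authorization": f"PVEAPIToken={token_id}={secret}",
        "Content-Type": "application/x-www-form-urlencoded",
    }
    return Request(url, data=urlencode(params).encode(), headers=headers, method="POST")


req = build_create_vm_request(
    "pve.example.lan", "pve1", "automation@pve!ansible",
    "00000000-0000-0000-0000-000000000000",
    vmid=120, name="web-01", cores=2, memory_mib=4096,
)
print(req.get_method(), req.full_url)
```

Keeping the spec in one function (or one set of playbook variables) is what makes every VM come out with the same shape.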

As part of that same workflow, Ansible connects to my IPAM (IP Address Management) system, queries it for an available IP in the appropriate subnet, reserves it, and assigns it to the VM automatically. So by the time the VM is up, it already has a properly tracked, reserved IP address with no manual intervention. No checking a spreadsheet, no logging into a separate system, no risk of IP conflicts because someone forgot to update the records. It just happens, correctly, every time.
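To make the allocation step concrete, here's a minimal sketch of the logic in Python. The in-memory `reserved` set is a stand-in for a real IPAM API, and both the helper name and the choice to skip the first ten addresses of the subnet (for gateways and infrastructure) are illustrative assumptions.

```python
import ipaddress


def next_free_ip(subnet: str, reserved: set[str], skip_first: int = 10) -> str:
    """Return the first unreserved host address in `subnet`.

    `skip_first` leaves the low end of the range alone; 10 is an arbitrary choice.
    """
    network = ipaddress.ip_network(subnet)
    taken = {ipaddress.ip_address(ip) for ip in reserved}
    for index, host in enumerate(network.hosts()):
        if index < skip_first:
            continue
        if host not in taken:
            return str(host)
    raise RuntimeError(f"no free addresses left in {subnet}")


reserved = {"192.168.10.11", "192.168.10.12"}
ip = next_free_ip("192.168.10.0/24", reserved)
reserved.add(ip)  # "reserve" it so the next VM can't be given the same address
print(ip)  # 192.168.10.13
```

Querying and reserving in the same step is the part that prevents conflicts; a real IPAM would do the reservation atomically on its side.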

From there, Ansible handles the VM's initial configuration, enforcing the desired state so the machine is set up the way it should be: OS settings, base packages, security configuration, whatever the standard baseline looks like for that type of machine. Every VM that comes out of this process is consistent because they're all built from the same playbooks. There's no drift caused by someone configuring one machine slightly differently by hand.
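One way to picture "the standard baseline for that type of machine" is a shared baseline combined with per-type additions. In Ansible this kind of layering is usually handled with inventory groups and group variables; the Python below only illustrates the idea, and the machine types, package names, and settings are hypothetical.

```python
COMMON_BASELINE = {
    "packages": ["openssh-server", "chrony"],
    "ssh_password_auth": False,
    "auto_security_updates": True,
}

# Hypothetical per-type additions and overrides.
TYPE_BASELINES = {
    "docker_host": {"packages": ["docker.io"]},
    "database": {"packages": ["postgresql"], "auto_security_updates": False},
}


def baseline_for(machine_type: str) -> dict:
    """Return the full desired configuration for a machine of the given type."""
    extra = TYPE_BASELINES.get(machine_type, {})
    merged = {**COMMON_BASELINE, **extra}  # type-specific scalar settings win
    # Package lists are combined rather than replaced.
    merged["packages"] = COMMON_BASELINE["packages"] + extra.get("packages", [])
    return merged


db_a = baseline_for("database")
db_b = baseline_for("database")
print(db_a == db_b)      # True: same type, same baseline
print(db_a["packages"])  # ['openssh-server', 'chrony', 'postgresql']
```

Because every machine's configuration is computed from the same source, two VMs of the same type can't quietly diverge.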

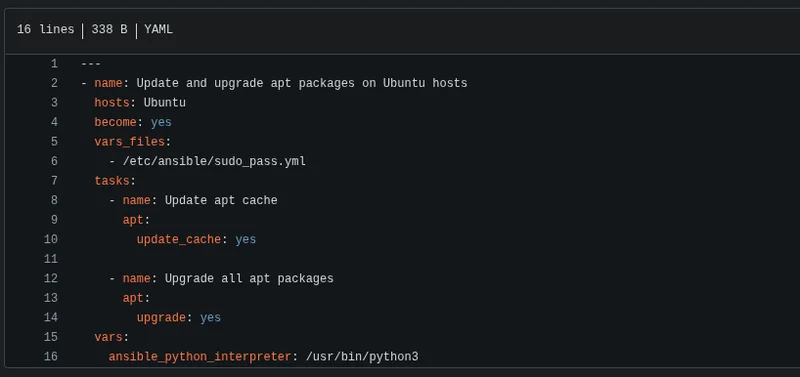

I also use Ansible to roll out and update the monitoring agents across my VMs. Rather than logging into each machine individually or maintaining some fragile custom script, I run a single playbook that handles the whole fleet. The same goes for Linux updates: I can patch all my VMs in a controlled, documented, repeatable way without touching each one manually.
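Patching a whole fleet at once is risky, so controlled updates are usually done in batches. Ansible supports this with the `serial` play keyword, plus `max_fail_percentage` to decide when to abort; the Python below is only a sketch of that batching idea, not Ansible's implementation, and the `patch` callable is a hypothetical stand-in for whatever actually updates a host.

```python
from typing import Callable


def rolling_update(hosts: list[str], batch_size: int,
                   patch: Callable[[str], bool]) -> list[str]:
    """Patch hosts in batches; stop before the next batch if any host in this one fails.

    Returns the hosts that were patched successfully.
    """
    done: list[str] = []
    for start in range(0, len(hosts), batch_size):
        batch = hosts[start:start + batch_size]
        results = {host: patch(host) for host in batch}
        done.extend(host for host, ok in results.items() if ok)
        failed = [host for host, ok in results.items() if not ok]
        if failed:
            print(f"stopping rollout, failed in this batch: {failed}")
            break  # later batches are never touched
    return done


fleet = ["vm-01", "vm-02", "vm-03", "vm-04", "vm-05"]
patched = rolling_update(fleet, batch_size=2, patch=lambda host: host != "vm-04")
print(patched)  # ['vm-01', 'vm-02', 'vm-03']
```

Stopping on the first bad batch keeps a broken update from spreading across every VM before anyone notices.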

And then there's decommissioning. When a VM needs to go, Ansible handles that too: it removes the VM from Proxmox, cleans up the IPAM reservation, and takes care of everything else that needs to happen on the way out. The same level of automation applies on the way out as on the way in.
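Teardown benefits from the same desired-state thinking: each step should be safe to re-run if a previous attempt failed halfway. Here's a minimal sketch in which two dictionaries are hypothetical stand-ins for Proxmox and the IPAM system, not real APIs.

```python
def decommission(vm_name: str, vms: dict[str, int], ipam: dict[str, str]) -> list[str]:
    """Remove a VM and release its IP reservation; safe to run more than once.

    `vms` maps VM name -> VMID and `ipam` maps VM name -> reserved IP.
    """
    actions: list[str] = []
    if vm_name in vms:  # step 1: remove the VM, if it still exists
        del vms[vm_name]
        actions.append(f"removed VM {vm_name}")
    if vm_name in ipam:  # step 2: release the address, if still reserved
        ip = ipam.pop(vm_name)
        actions.append(f"released {ip}")
    return actions


vms = {"web-01": 120, "db-01": 121}
ipam = {"web-01": "192.168.10.13", "db-01": "192.168.10.14"}
first_run = decommission("web-01", vms, ipam)
second_run = decommission("web-01", vms, ipam)
print(first_run)   # ['removed VM web-01', 'released 192.168.10.13']
print(second_run)  # []
```

Removing the VM before releasing its address is a deliberate ordering: it avoids handing out an IP that a still-running VM is using.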

The whole thing is fully autonomous. I've got this running to the point where the amount of manual work involved in spinning up or tearing down infrastructure is almost nothing. The human input is deciding what needs to happen. Ansible figures out how to make it happen and then does it.

Why idempotency actually matters

I mentioned idempotency earlier and I want to come back to it because I think it's underappreciated by people who haven't used a proper configuration management tool before.

Traditionally, if you want to make sure twenty servers are configured consistently, you either do it manually, which doesn't scale and introduces human error, or you write scripts that run commands and hope they handle edge cases properly. The problem with scripts is that they're usually written assuming a clean starting state. Run them against a machine that's already partially configured and things break in unpredictable ways.

Ansible doesn't work like that. Each task in a playbook describes what the end state should look like, not the commands needed to get there. Whether you're running it against a brand new VM or one that's been running for two years, the result is the same. Ansible checks the current state, identifies what needs to change, and makes those changes. Nothing more. That reliability is what makes it suitable for production automation rather than just handy scripting.
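The check, identify, change loop can be sketched in a few lines of Python. This illustrates the idea rather than how Ansible works internally; the settings are made up, and the `check_mode` flag mirrors the spirit of `ansible-playbook --check`, which reports what would change without changing it.

```python
def plan(current: dict, desired: dict) -> dict:
    """Return only the settings whose current value differs from the desired one."""
    return {key: value for key, value in desired.items() if current.get(key) != value}


def converge(current: dict, desired: dict, check_mode: bool = False) -> dict:
    """Apply the planned changes unless check_mode is set; return what (would have) changed."""
    changes = plan(current, desired)
    if not check_mode:
        current.update(changes)
    return changes


desired = {"ntp": "enabled", "firewall": "enabled", "root_login": "disabled"}
new_vm: dict = {}
old_vm = {"ntp": "enabled", "firewall": "disabled", "root_login": "disabled",
          "hostname": "legacy-01"}  # settings the playbook doesn't manage are left alone

print(converge(old_vm, desired, check_mode=True))  # {'firewall': 'enabled'}
converge(new_vm, desired)        # brand new VM: everything gets set
converge(old_vm, desired)        # old VM: only the firewall changes
print(converge(old_vm, desired))  # {}
```

Both machines end in the same managed state despite starting from very different places, and a second run finds nothing to do.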

The integration angle

What really unlocks Ansible's value in my setup is how well it integrates with everything else. The Proxmox API integration and the IPAM integration aren't hacks or workarounds; Ansible is designed to integrate with external systems. There are thousands of modules covering everything from cloud providers to network devices, monitoring systems, and databases. If you need to automate something that involves multiple systems talking to each other, Ansible is almost always going to have a clean way to do it.

This is what separates it from just writing a bunch of shell scripts that do similar things. Shell scripts work until they don't, they're hard to read six months later, and composing them into complex multi-system workflows gets messy fast. Ansible playbooks are readable, structured, and maintainable. Someone else can look at a playbook and understand what it's doing without needing to reverse engineer it.

Why you should know this

Honestly, if you're working in IT infrastructure and you're not using some form of configuration management and automation tooling, you're making your life harder than it needs to be and accumulating technical debt in the form of manual processes that will eventually hurt you.

Ansible is a particularly good starting point because the barrier to entry is genuinely low. You don't need to restructure your infrastructure to start using it, you don't need to install agents everywhere, and the YAML syntax is readable enough that you can get productive with it relatively quickly. You can start small: automate one repetitive task, understand how it works, and build from there.

The ceiling is also very high. What I've described here, fully automated VM lifecycle management integrated across multiple systems with next to no manual intervention, is something I built up incrementally. It didn't start that way. It started with a few simple playbooks and grew as I found more things worth automating.

That's the thing about Ansible. Once you start using it properly you start seeing manual processes everywhere and you want to automate all of them. That's a good instinct to have.